The team behind the popular PlayStation 3 emulator RPCS3 have seen a rise in what they say are "AI slop code pull requests".

We've seen other open source projects face similar issues: the rise of AI bots and coding agents has caused waves of people to submit all sorts of random junk anywhere they possibly can. The Godot Engine team previously complained about the same issue, as have many others.

Writing on X, the RPCS3 team said:

> Please stop submitting AI slop code pull requests to RPCS3. We will start banning those who do without disclosing. There are plenty of resources online to learn how to debug and code instead of generating slop that you don't understand and that doesn't work.

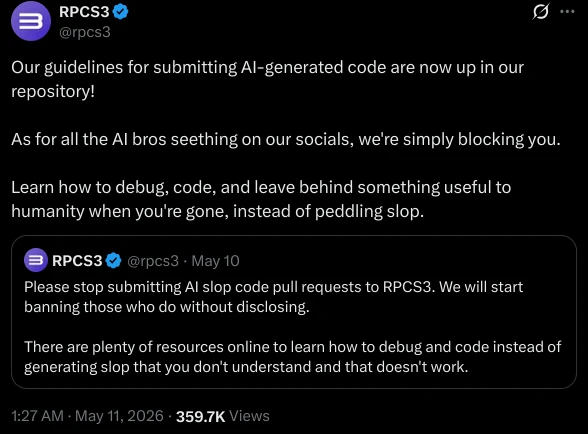

In a follow-up post they additionally said:

> Our guidelines for submitting AI-generated code are now up in our repository!
>
> As for all the AI bros seething on our socials, we're simply blocking you.
>
> Learn how to debug, code, and leave behind something useful to humanity when you're gone, instead of peddling slop.

They're not actually against AI-generated code. The problem is people submitting pull requests that are likely 100% AI-generated without understanding what the code does or, even worse, code that is simply useless, untested junk. Their new guidelines, as stated on GitHub:

> Use of AI tools for research and reverse engineering purposes is permitted. However, contributors are expected to fully own and understand all code they submit. Any communication with the team — including code, code comments, and GitHub comments — must come from the human contributor, not an AI agent acting autonomously.
>
> We have unfortunately seen a rise in untested and unverified AI-generated slop being submitted to this project. This wastes maintainer time and, in worse cases, such changes get merged and break functionality for all users. Repeated violations will result in a ban from the repository. Please be respectful of everyone's time.
>
> Pull requests opened by AI agents or automated tools must include a disclosure in the PR description stating the scope of AI involvement — which parts were AI-generated and what human testing or review was performed prior to submission. PRs that omit this disclosure may be closed without review.
>
> If you are unsure about your work, open a discussion issue to talk it through with the team, or reach out to a maintainer on Discord.

Not even speaking about situations like Github writing "co-authored by Copilot" on non LLM PRs.

The mind behind this idea is great and I would support it, but the real world situation just shows that it harms more than it actually helps. The FOSS-community needs to think about a better solution.

Last edited by PlayingOnLinuxphone on 12 May 2026 at 2:01 pm UTC

Quoting: awfulsauce

> It appears one of my worst fears when it comes to FOSS and AI is coming into fruition. AI makes a lot of wannabe developers lazier because they don't try to understand what results they're getting from it and think that's the be all end all to solving the problem.

Quoting: PlayingOnLinuxphone

> That idea exists for decades and all times it was tried ended up in banning potential serious developers. I mean, just give a look how Reddit moderators do their job. We all heard about the horror stories of people getting banned for non harmful content if it just contains a minimum amount of critics to a topic. If I can ban you in the network, you are banned everywhere, not just on my project, even if I did not ban you for AI usage, I just need to tell you used AI.

That was then, this is now. I think the whole situation needs a rethink. It's not a coincidence that multiple projects have had to create AI policies due to slop. Tools like Openclaw make this kind of thing very hard to counteract, because the AI won't give up: ban it, and it'll just create a new account and re-submit, over and over.

I suspect that the only way to truly police this, ultimately, is a system like StackExchange's, where pull/merge requests simply aren't allowed at first. Instead, you climb a step-ladder of ever-so-slightly-increasing rights as your reputation rises until, eventually, you are trusted to contribute within the boundaries of that project's policies.

Sadly the infrastructure for this doesn't exist. Yet.