Oh deary me, NVIDIA have a bit of a wildfire on their hands here, with NVIDIA DLSS 5 being compared with AI generated slop "art".

Perhaps lumping together their previous rather good upscaling and frame generation tech, with something else entirely that completely changes the faces of characters was not the best idea huh? Who could have seen this coming? Apparently not NVIDIA.

NVIDIA are now doing a little damage control, posting in the replies of their own video with a pinned comment that notes:

Important to note with this technology advance - game developers have full, detailed artistic control over DLSS 5's effects to ensure they maintain their game's unique aesthetic. The SDK includes things like intensity, color grading and masking off places where the effect shouldn't be applied. It's not a filter - DLSS 5 inputs the game’s color and motion vectors for each frame into the model, anchoring the output in the source 3D content.

Even Bethesda are doing some damage control of their own, as Starfield was one of the games recently shown off, with a post on X/Twitter in reply to Digital Foundry noting:

Appreciate your excitement and analysis of the new DLSS 5 lighting here. This is a very early look, and our art teams will be further adjusting the lighting and final effect to look the way we think works best for each game. This will all be under our artists’ control, and totally optional for players.

Across the internet, it seems a whole lot of people and game developers have begun (rightly so) absolutely ripping into NVIDIA for DLSS 5 and what it's doing to game visuals. NVIDIA say it's "not a filter", but it's hard not to laugh at how it changes character faces into what looks exactly like you would imagine generative AI beautification looksmaxxing tools would do. Or, yassifying, if you will. I'm learning a lot of new silly words thanks to this. This is the kind of stupid AI generative filtering I would have expected from some sort of AI porn generation website, not from the likes of NVIDIA. Who cares about art direction when you can plump up the lips of a character right?

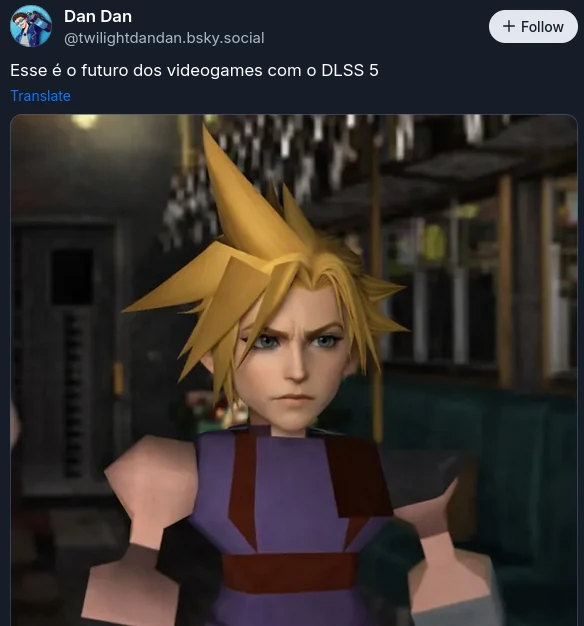

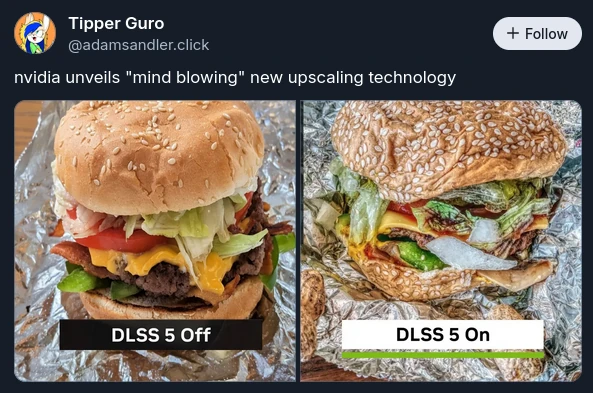

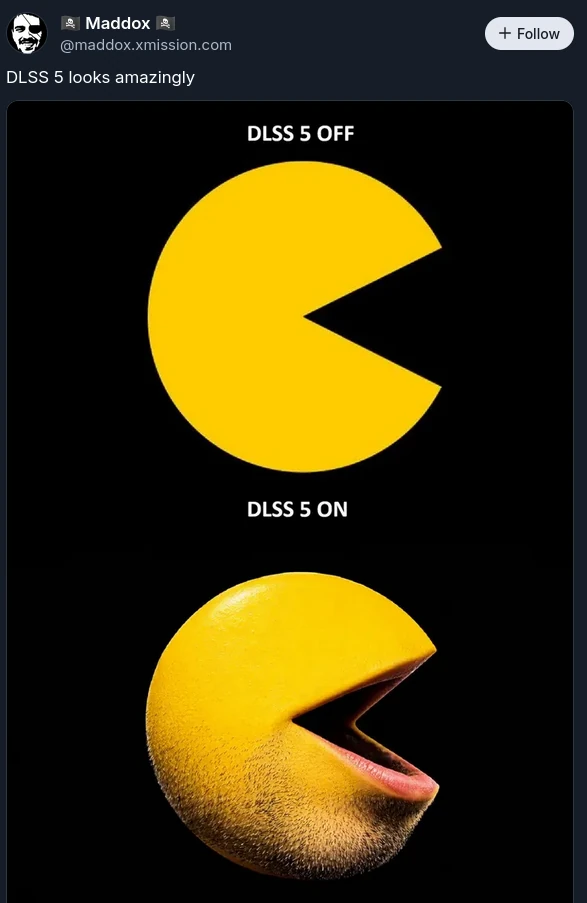

Some favourite funnies from the situation include:

I feel like I could go on forever, as I keep chuckling away at NVIDIA creating what is possibly one of the funniest video-game related meme templates recently.

Anyway, here's the video if you missed all the fun:

Direct Link

Thanks NVIDIA, I hate it.

Quoting: alka.setzer
Nvidia explicitly said that they were running two 5090s, one for rendering and the other for DLSS 5 processing, so that they could maintain fluid fps in the demos. They also said they had DLSS 5 running on a single card in the lab (didn't say which, could be an RTX 6090). DLSS 5 is supposed to come out in Q4.

That still doesn't really answer it. As I said before, the performance issue could be the result of changes that are needed conflicting with other aspects of the pipeline. DLSS runs on the tensor cores, so running it on a second card means the tensor cores on the primary card are doing nothing, unless they're actually running the upscaling/frame gen split off from this stuff because of conflicts/bugs.

If they've only just gotten it working on a single card in lab settings (the 60 series isn't coming until the end of 2027 at the earliest, so it's not that), to me that would indicate something besides raw computational cost, or they'd never be able to get it out by Q4 for anything except a 5090.

My guess is there are either bugs that cause it to interfere with upscaling or they just haven't been able to get the newer model to fit in the cache of the tensor cores.

Last edited by GoEsr on 17 Mar 2026 at 8:57 pm UTC

Instead we saw exaggerated features, that didn't align with the original character, that required 2 5090s.

Quoting: kit89
If they had taken high fidelity renderings of the characters provided by the artist (doesn't need to be photoreal), and showed it running on a 4060 where the low definition character was replaced with the high definition version, then I think that would have been impressive.

Instead we saw exaggerated features, that didn't align with the original character, that required 2 5090s.

AI cores on NVIDIA GPUs take so much space that if they were removed and replaced with rendering cores, there might not be any need for upscaling in the first place. Why do you think NVIDIA went for that ridiculous design? The answer is simple: scaling production. It is cheaper for NVIDIA to produce the same GPUs for both datacenters and individual consumers, and ordinary gamers have been paying the price for it since 2019. DLSS 4.5 with the transformer model is certainly a good upscaling method, but it largely has nothing to do with AI cores, except pixel filtering to mitigate ghosting; that's all the AI cores do in the denoising chain.

Quoting: GustyGhost
If this takes off, you just know the mentality of mesh animators and modelers will transform into "I don't need to worry about that awkward proportion / refine that unconvincing motion, it's just going to get covered over with an AI post-processing pass anyway."

This is what most people don't understand yet: the "we will allow developers/artists to have control and make adjustments" line is just a fallacy.

Developers don't optimize after DLSS/XeSS/FSR

Developers don't optimize X2 after FrameGen

Developers are leaving raster fallback behind after Ray Tracing

What do you think they will do after Dall-ESS5??

Quoting: Jarmer
Which is just so misogynistic.

I haven't seen this mentioned nearly enough, it's enforcing Instagram and TikTok filter beauty standards. It gave Grace lip injections. There are so many layers as to why this is awful. I miss the pre-AI world.

Quoting: discocat
Usually when you announce a product, you try to show it in the best light, so my take on all this is that what they showed here (heavily edited result) is what they're the most proud of.

Nvidia generated all that content, those videos, those examples, being super duper proud of it like it was the best thing ever. They're genuinely convinced it's awesome.

Quoting: Stella
and completely deserved too. Nvidia itself has become a meme

Quoting: Jarmer
WOW that looks absolutely horrid. Their heads are so far up their own asses they couldn't tell? And then went on to be PROUD of that ...?

Based on what I saw in that YouTube video, I thought it looked awesome and has enormous potential... And I can't stand NVIDIA as a company!

Surely I'm not the only one?

Quoting: melkemind
That's why the game characters end up looking like Instagram models.

That's a bad thing? 😂

Quoting: Brokatt
I sincerely hope AMD and Intel won't follow but something tells me they can't help themselves.

Nah, I look forward to this - as long as it suits the particular game type! For example, it would make little sense to have such realism for say, Palworld... But it would be fantastic to see this implemented in The Last of Us.

Quoting: Jarmer
as others have said, it 100% looks fake pornified. Which is just so misogynistic.

Again, that's a bad thing? 🤣

In all seriousness though, you're blowing this way, waaay out of proportion... This is in no way "pornified"; even using the "Instagram models" comment above is grasping at straws, to be honest.

Quoting: Ehvis
Did they really pay off a bunch of studios for demo games so that those 7 people in the world that have a spare 5090 can use it?

Quoting: alka.setzer
Nvidia explicitly said that they were running two 5090s, one for rendering and the other for DLSS 5 processing, so that they could maintain fluid fps in the demos. They also said they had DLSS 5 running on a single card in the lab (didn't say which, could be an RTX 6090). DLSS 5 is supposed to come out in Q4.

Quoting: kit89
that required 2 5090s.

Meh. This will trickle down to us peasants in the coming years... It's a sign of what you can have now if you're rich, and what us peasants should expect in the future.

Quoting: Kapellini
I haven't seen this mentioned nearly enough, it's enforcing Instagram and TikTok filter beauty standards.

Good lord, here we go...

Quoting: Cyba.Cowboy
Nah, I look forward to this - as long as it suits the particular game type! For example, it would make little sense to have such realism for say, Palworld... But it would be fantastic to see this implemented in The Last of Us.

Each to his own. I think I prefer to play a game, see a movie or listen to music the way the artists intended. Not have an AI interpret it for me. I'm not interested in having a TikTok beauty filter over my games.

Quoting: Wolfgang Rose
It looks impressive, but it's NVIDIA... I'm on a 4070 at the moment and my upgrade cycle is 3-5 years away based on what I play. This will be my last NVIDIA card though, I'm not going to support a company that is actively trying to kill the pastime I have had almost my entire life.

Yep. I'm still on a 1060 and finally hitting the point where I need to upgrade soon. I've always been a GeForce guy, but not anymore. The build I start within the next few months will definitely contain a Radeon.

Last edited by Expalphalog on 19 Mar 2026 at 4:48 pm UTC

Last edited by Mambo on 22 Mar 2026 at 11:37 am UTC

Quoting: robvv
Yikes :-o I play games to get away from photo-realism!

Nah, I think for certain games it would be awesome... For example, if you had a game like The Last of Us or Red Dead Redemption, photo-realism would be great; but for a game like Palworld or Hollow Knight, it would make absolutely no sense.

As long as they only use stuff like this where it is relevant it could be good.

Quoting: Mambo

Exhibit "A" of where this sort of technology would make absolutely no sense.

Quoting: Cyba.Cowboy
Quoting: robvv
Yikes :-o I play games to get away from photo-realism!

Nah, I think for certain games it would be awesome... For example, if you had a game like The Last of Us or Red Dead Redemption, photo-realism would be great; but for a game like Palworld or Hollow Knight, it would make absolutely no sense.

As long as they only use stuff like this where it is relevant it could be good.

The thing you need to be aware of is that photo-realism and realistic are not necessarily the same thing. Take movies for instance (especially those from a time when they still took great pride in art direction). These are filmed, so by definition photo-realistic. However, everything you see on screen, from people, lighting and atmosphere to color grading, was created with intent. This is not necessarily realistic, but it makes the scene better. This is how it should be and this is also how games should be made. This tech is not benefiting any of that.
